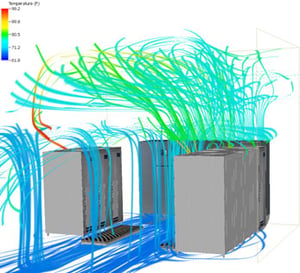

Since we’re in the digital mood today, here’s a less-than-140-character introduction to computational fluid dynamics (CFD): CFD takes a very complex fluid dynamics problem and breaks it up into tiny chunks that computers can solve.

We don’t quite have the computing power to predict where every molecule will go, but by breaking up a complex problem into discrete boxes and solving for the conditions of each box, computers can quickly and accurately simulate fluid behavior. This helps us to predict how air and heat will move around in a complex airflow network. For reasons that I hope are now obvious, this is extremely useful in data centers!
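
To make the "discrete boxes" idea concrete, here is a minimal sketch in Python. It is not a real CFD solver: it ignores airflow entirely and only solves steady heat diffusion on a small grid, which is one of the simplest problems that can be handled box by box. The function name `solve_boxes`, the grid size, and the temperatures are all assumptions chosen for illustration.

```python
def solve_boxes(nx=12, ny=12, cold=18.0, hot=35.0, sweeps=500):
    """Jacobi iteration for steady heat diffusion on an nx-by-ny grid of boxes.

    Illustrative only: real CFD also solves for air velocity and pressure.
    """
    # Start every box at the cold temperature (degrees C, assumed values).
    grid = [[cold for _ in range(nx)] for _ in range(ny)]

    # Boundary condition: the right-hand column is a hot wall.
    # The other three edges stay fixed at the cold temperature.
    for row in grid:
        row[-1] = hot

    for _ in range(sweeps):
        new = [row[:] for row in grid]
        # Each interior box becomes the average of its four neighbours.
        for j in range(1, ny - 1):
            for i in range(1, nx - 1):
                new[j][i] = 0.25 * (
                    grid[j][i - 1] + grid[j][i + 1]
                    + grid[j - 1][i] + grid[j + 1][i]
                )
        grid = new
    return grid


if __name__ == "__main__":
    result = solve_boxes()
    # Print the middle row: temperatures rise toward the hot wall.
    print(" ".join(f"{t:5.1f}" for t in result[len(result) // 2]))
```

Each box only needs to know about its neighbors, which is why the problem scales to millions of boxes on ordinary hardware.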

Data center CFD helps answer detailed questions like:

- How much airflow are my servers really consuming?

- Do I need to pay for full aisle containment, or can I do just as well with end panels?

- How high can I set my cooling temperature to save energy?

- Do I really need this many CRACs?

- Do I really need this many perforated tiles?

- Is that column going to mess anything up?

I often encounter skepticism when it comes to the true impact of CFD in a data center. I try to counter this with two points. First, CFD is based on tested results from real-world components. For example, components in our data center CFD models are calibrated to actual vendor performance data. This provides a high level of assurance that the results in the model will match the conditions in the real world. On top of that, there are countless case studies of CFD saving data center owners thousands of dollars in wasted energy.

Second, if you’re going on a trip, would you rather have a general direction or a map? If I wanted to go to Boston, I could just head north and maybe end up there, or I might end up in Vermont. Close, but not really where I wanted to go. If I have a map (or in the theme of the day, a GPS-enabled smart dashboard), I’ll make sure I get to Boston. Hopefully in time for a nice crab cake dinner.

In data center design, best practices are the general direction, and CFD is the map. Best practices will get me on my way to a good design. I know there are certain ways that servers need to be oriented, and I know general rules for the amount of air and cooling I need. But without a map, it’s very likely I’m going to over-design and put in too much equipment. This leads to wasted energy and often unintended consequences and imbalanced conditioning of my IT equipment.
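
As an example of the kind of general rule I mean, the airflow needed to carry away a given IT load follows from the sensible heat balance: airflow = power / (air density × specific heat × temperature rise). The sketch below assumes standard air properties and a hypothetical 10 kW rack with a 12 K temperature rise across the servers; none of these numbers come from a specific project.

```python
AIR_DENSITY = 1.2          # kg/m^3, assumed: air near room temperature at sea level
AIR_SPECIFIC_HEAT = 1005   # J/(kg*K), assumed: dry air
M3S_TO_CFM = 2118.88       # cubic feet per minute in one cubic metre per second


def required_airflow_m3s(it_load_watts, delta_t_kelvin):
    """Airflow (m^3/s) needed to remove it_load_watts at a given air temperature rise."""
    if delta_t_kelvin <= 0:
        raise ValueError("temperature rise must be positive")
    return it_load_watts / (AIR_DENSITY * AIR_SPECIFIC_HEAT * delta_t_kelvin)


if __name__ == "__main__":
    # Hypothetical rack: 10 kW load, 12 K rise from inlet to exhaust.
    flow = required_airflow_m3s(10_000, 12)  # roughly 0.69 m^3/s
    print(f"{flow:.2f} m^3/s, about {flow * M3S_TO_CFM:.0f} CFM")
```

A rule like this tells me how much air the room needs in total. It says nothing about whether that air actually reaches each server inlet, and that is the gap the map fills.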

If you’re designing or operating a data center, do yourself a favor and go get yourself a map!

A CFD Case Study

So what’s the best time to use CFD? Before you start building! CFD is a very useful tool for retrofitting existing data centers, but its best use is as a predictive tool before the first tile is installed.

In 2014, we had the good fortune of working with one of our clients to help them solve a data center problem. The Thomas Jefferson National Accelerator Facility, or Jefferson Lab, houses a large particle accelerator nearly a mile in length. It is also one of 17 national laboratories funded by the U.S. Department of Energy (DoE).

These two traits create an interesting convergence for Jefferson Lab. As you can imagine, particle accelerator experiments crank out a lot of data. As a DoE-funded laboratory, energy efficiency is also very important to their operations. It should come as no surprise, then, that Jefferson Lab faced some critical decisions when it came time to expand their existing data center.

Shifting space constraints and expanding IT needs required Jefferson Lab to consolidate several data rooms into a single, central data center. As with many data centers, this had to be done with minimal disruption to operations and without a loss of computing capacity. To top it off, the PUE goal for the data center was established at 1.4, an aggressive number for the often hot and humid climate of coastal Virginia.
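
For anyone new to the metric, PUE (power usage effectiveness) is total facility energy divided by IT equipment energy, so a PUE of 1.4 means 0.4 units of overhead (cooling, power distribution losses, lighting) for every unit delivered to the servers. Here is a minimal sketch of the arithmetic; the function name and the annual energy figures are made up for illustration and are not Jefferson Lab data.

```python
def pue(it_energy_mwh, cooling_energy_mwh, other_overhead_mwh):
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    if it_energy_mwh <= 0:
        raise ValueError("IT energy must be positive")
    total = it_energy_mwh + cooling_energy_mwh + other_overhead_mwh
    return total / it_energy_mwh


if __name__ == "__main__":
    # Hypothetical annual totals in MWh, chosen only to show the calculation.
    value = pue(it_energy_mwh=1000, cooling_energy_mwh=300, other_overhead_mwh=80)
    print(f"PUE = {value:.2f}")  # prints "PUE = 1.38"
    print("meets 1.4 goal" if value <= 1.4 else "misses 1.4 goal")
```

Because cooling is usually the largest overhead term, anything that trims cooling energy moves PUE directly.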

This required some careful thought and planning. By working directly with data center personnel, we were able to develop a detailed phasing plan and a conceptual design to safely and comfortably consolidate Jefferson Lab’s IT operations. But to provide a true map to a 1.4 PUE, we had to move beyond traditional design.

By meeting and planning directly with IT personnel, we developed a predictive CFD model of Jefferson Lab’s expanded data center. This allowed optimization of the proposed data center layout before relocating a single server.

The benefits of this optimization were tangible. In completing predictive CFD, we were able to:

- Confirm the proposed cooling solution would maintain inlet conditions for all servers within ASHRAE recommendations

- Provide assurance of continuous cooling in the event of failure of a single CRAC unit

- Consolidate cold aisles to free space for future expansion

- Validate the proposed aisle containment solution for energy efficiency

- Establish server airflow limits to be used in IT equipment procurement

- Elevate the recommended cooling supply temperature to expand the availability of free cooling throughout the year

To tie it all together, we were able to feed the results of the CFD study back into our energy analysis engine. The data center airflow optimization, coupled with improvements to the chiller plant, brought the predicted annual PUE below the 1.4 threshold.

The end result of this analysis was an optimized design, and much greater assurance for the design team and client that the retrofit would achieve the ultimate energy-efficiency goals of the project.

For more information, see CFD in Data Centers – A Case Study.